Last month I had the privilege of being invited to deliver a talk to the Catalan Center for Legal Studies and Specialised Training, a centre for judicial training in Barcelona. The title of talk was ‘AI & the compression of law’, and in it my goal was to debunk the idea of the ‘robot judge’ (always depicted as a glassy white robot figure, either with a blindfold or the scales of justice). Instead, I argued, the worry with the use of AI in law is not the replacement of judges, but rather the subtle reshaping of their activities (and those of other parties in the litigation sphere) by systems whose machine learning underpinnings are geared toward a form of optimisation and relevance that are not necessarily compatible with legal notions of optimality or relevance.

This is a more profound problem than the ‘robot judge’, because its effects are much more subtle – jurists don’t necessarily understand the technical logics underlying the systems they use in their daily practice – but the impact on the Rule of Law is all the more important because of this. Automation bias means jurists will tend to accept the outputs of legal tech systems involved, for example, in search and document drafting, but as we’ve seen in recent debates in Natural Language Processing, large language models (LLMs) are sophisticated pattern matching systems that have no access to the underlying meaning the words represent.

When lawyers take the outputs of such systems as an accurate, valid, or legitimate representation of legal reality, they imbue something that has been generated according to statistical probability with legal legitimacy, and this is potentially corrosive to the ‘creative step’ that lies at the heart of the Rule of Law: the ability to make a new argument that synthesises empirical facts with legal norms in service of the client’s rights and interests. Without that essential aspect of legal practice, the Rule of Law loses its ability to respond to contingent circumstances, and we end up with Holmes’ prediction of the “man of statistics”.

He suggested that the phenomenon of law consists in “the prophecies of what the courts will do in fact, and nothing more pretentious”, my suggestion is that we must avoid law becoming "the prophecies of what legal tech will do in fact, and nothing more pretentious". Since legal technologies mediate legal practice – and always have – their affordances shape that practice. And so, if the underlying design choices reflect a particular logic, as they inevitably will (since technology cannot be neutral), then we have to enquire into what that logic is.

In the case of legal tech built around machine learning, the logic is not legal per se, but instead statistical – it’s about what is likely to come next following a search term or seed text for document generation, on the basis of what patterns of text exist within the training dataset. If lawyers unthinkingly accept such outputs (and automation bias is likely to lead to this, as is the notion of robotomorphy, the inverse of anthropomorphy), we arrive at the antithesis of the Rule of Law, which at its heart must always provide (i) procedural mechanisms that (ii) allow for alternative interpretations of texts such that (iii) reasoned argumentation bridging facts and norms can result in (iv) individuals or collectives receiving the protection of law in specific circumstances, that protection being the underlying spirit that animates and informs this process.

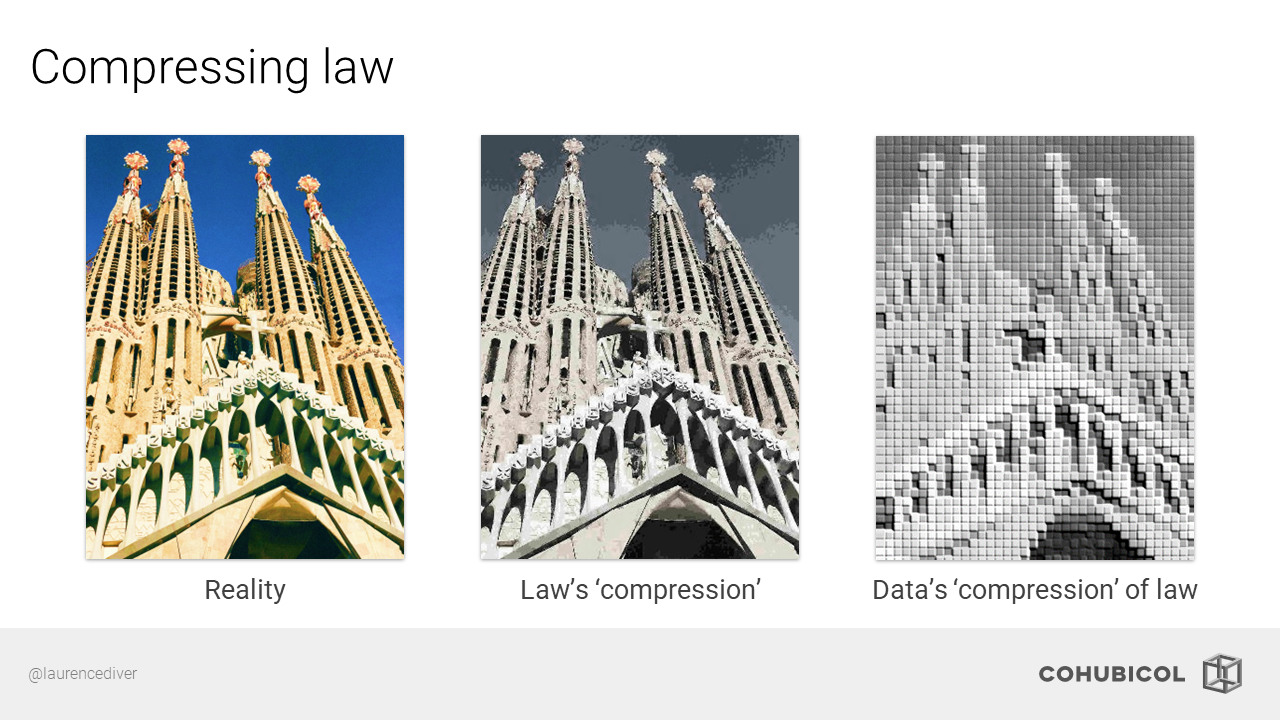

When we rely on datafied abstractions of law – which is already an abstraction of the real world – we are potentially working with something that has ‘compressed’ away important and relevant information to the legal enterprise, and while we can and do work with such abstractions (statistical legal tech does provide usable results), this is paradoxically where the problem sits – by relying on such compressed outputs, we potentially constrict legal flexibility, in ways that might not be apparent to us.

For a related blog post I wrote with Pauline McBride, see ‘High Tech, Low Fidelity? Statistical Legal Tech and the Rule of Law’ on Verfassungsblog. We also have a full paper forthcoming in Communitas.

References

- Oliver Wendell Holmes, ‘The Path of the Law’ (1897) 10 Harvard Law Review 457

- Emily M. Bender, ‘On NYT Magazine on AI: Resist the Urge to be Impressed’ (Medium, 18 Apr 2022)

- Henrik Skaug Sætra, ‘Robotomorphy’ [2021] AI and Ethics. DOI: 10.1007/s43681-021-00092-x